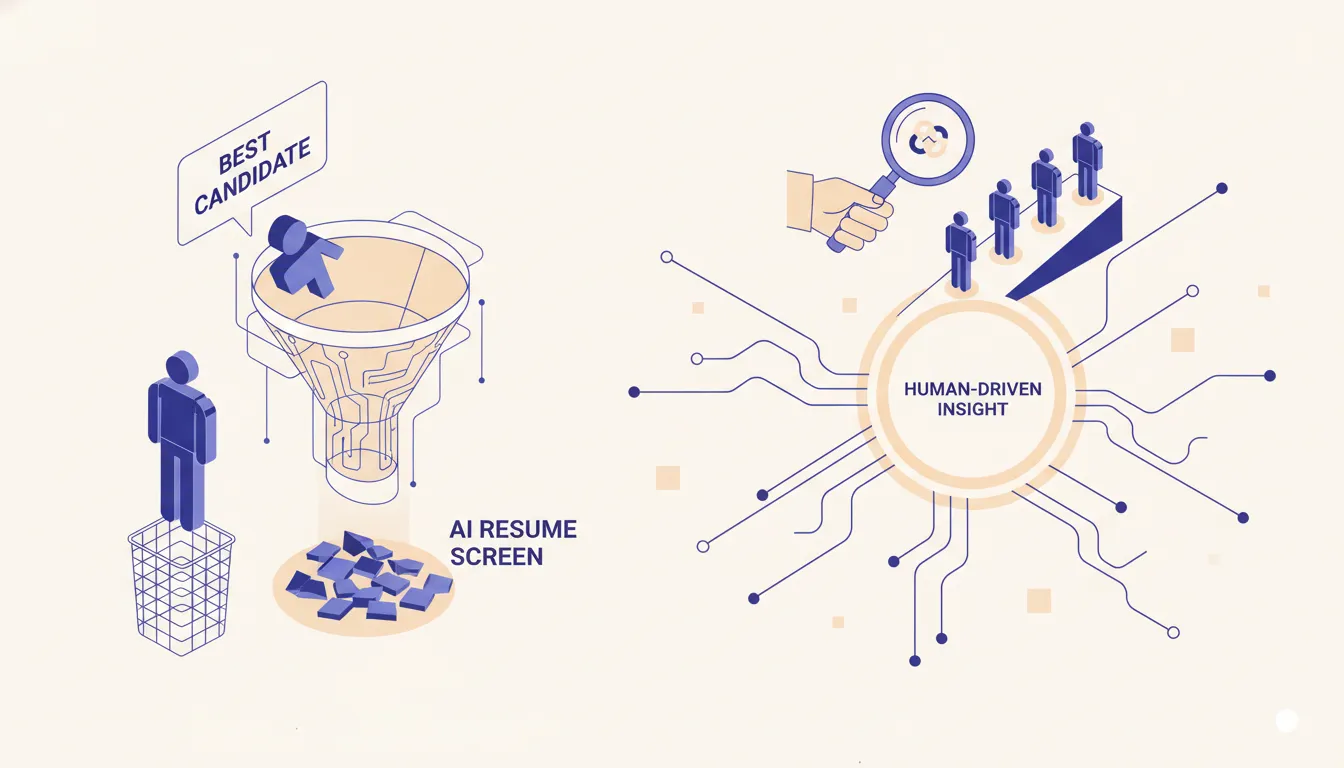

AI resume screening filters out your best candidates

AI resume screening tools are sold as efficiency. The published research shows they reject 27 million qualified candidates in the United States alone. The fix is not a smarter screener. It is a different architecture.

--

The 27 million number that nobody selling AI screening software wants to talk about

In 2021 a research team led by Joseph Fuller at Harvard Business School published a study called Hidden Workers: Untapped Talent. The headline finding, reported by the Harvard Gazette and the HBS Managing the Future of Work program, was that automated resume screeners were systematically rejecting more than 27 million qualified workers in the United States alone. Workers who could do the job. Workers who applied for the job. Workers whose resumes never reached a human because a software filter decided they did not match.

That study was about the keyword-era applicant tracking system. The boolean filter that rejects you because your resume says "client service" instead of "customer service." Five years later, every vendor in the recruiting tech stack has rebranded around AI. Embeddings, LLMs, fit scores, semantic matching. The pitch is that the keyword problem is solved. The pitch is wrong.

The keyword problem is not solved. It has been replaced with a problem that is harder to detect and harder to argue with. A keyword filter that rejects you for missing a phrase is at least legible. You can read the job description, see the keyword, see your resume, and understand the rejection. An embedding-based screener that rejects you because your resume vector falls outside a similarity threshold to whatever the model was tuned on is opaque. The candidate cannot see the criterion. The recruiter usually cannot either. The hidden workers got more hidden.

This post is for recruiting firms running 1 to 10 desks and in-house TA teams of 10 to 150 people. It is a contrarian take. The thesis is that almost every AI resume screener on the market today, sold as the cure for the keyword era, inherits the same architectural mistake that produced the 27 million number, and that the right move for a small recruiting operation is not to buy a smarter screener. It is to build a different shape of system, where the model reads resumes and a human (or a rubric you wrote) makes the call.

What "AI resume screening" actually means in 2026

Strip the marketing and AI resume screening on the market today resolves to one of four architectures, with very different failure modes.

Keyword expansion with synonyms. The oldest pattern. The vendor expands the recruiter's keyword list through a thesaurus or a learned synonym model. Resume says "PostgreSQL," role asks for "Postgres," the system matches. This is the entire AI story for a meaningful number of "AI-powered" ATS add-ons. The failure mode is the same as the keyword era: if the candidate's experience is described in language the synonym list does not cover, they get filtered.

Embedding-based similarity scoring. The resume and the job description get embedded into vectors. The system computes cosine similarity and ranks candidates. The failure mode is subtle. The model does not know what makes a candidate qualified. It knows what previously hired candidates' resumes looked like, in aggregate. If your past hires share a writing style, an alma mater pattern, or a specific career arc, the embedding model picks up on it and rewards candidates whose resumes look like the template. Hidden workers, by definition, do not look like the template.
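
To make the mechanism concrete, here is a minimal sketch of similarity ranking with a cutoff. The vectors are toy three-dimensional stand-ins for real embeddings, and the names `rank_candidates`, `template_like`, and `hidden_worker` are invented for illustration, not taken from any vendor's product.

```python
import math

def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # treat an all-zero vector as "no similarity"
    return dot / (norm_a * norm_b)

def rank_candidates(job_vec, resume_vecs, threshold):
    """Return (candidate_id, score) pairs at or above the threshold, best first."""
    scored = [(cid, cosine_similarity(job_vec, vec)) for cid, vec in resume_vecs.items()]
    kept = [(cid, score) for cid, score in scored if score >= threshold]
    return sorted(kept, key=lambda pair: pair[1], reverse=True)

# Toy vectors (assumption): real embeddings have hundreds of dimensions.
job = [1.0, 0.0, 0.0]
resumes = {
    "template_like": [0.9, 0.1, 0.0],
    "hidden_worker": [0.5, 0.5, 0.7],
}
shortlist = rank_candidates(job, resumes, threshold=0.8)
```

With these numbers only `template_like` clears the cutoff. Nothing in the output tells the recruiter why `hidden_worker` dropped out, which is the opacity problem in miniature.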

LLM-based scoring. The resume and job description go to a language model with a prompt asking for a fit score. The model returns a number, a justification, and sometimes a tag. Language models are exquisitely sensitive to surface features of resumes: formatting, phrasing, the presence of a degree from a brand-name school, the gap on the timeline. A candidate with three years out of the workforce raising kids and a 2018 bootcamp credential will frequently score below a candidate with an unbroken Goldman track record, even when the job calls for the bootcamp graduate's stack. The model is not biased in a programmatic sense. It is responding to the patterns in its training data, which encode every hiring bias of the last decade.

Hybrid systems. Most vendors layer the above. Embedding pre-filter, then LLM scoring on the survivors, then keyword overrides. The failure modes compound. By the time a candidate has cleared three filters tuned on patterns from past hiring, the surviving pool looks statistically like the past hires, which reproduces the selection pattern of an industry that already filters out 27 million qualified workers.
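
A rough way to see the compounding is to multiply per-stage recall. The sketch below assumes each stage drops qualified candidates independently and uses an invented 90% figure per stage. Real filters tuned on the same history are correlated, so treat the result as an illustration of the shape, not a measurement.

```python
def pipeline_recall(stage_recalls: list[float]) -> float:
    """Fraction of qualified candidates who survive every stage,
    assuming the stages lose qualified candidates independently."""
    survived = 1.0
    for recall in stage_recalls:
        survived *= recall
    return survived

# Illustrative numbers only: each stage keeps 90% of qualified candidates.
three_stage = pipeline_recall([0.9, 0.9, 0.9])
print(f"{three_stage:.3f}")  # 0.729
```

Under those assumptions, three filters that each look fine in isolation lose more than a quarter of the qualified pool between them.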

The point is not that any individual technique is broken. Each is useful for the right narrow purpose. The point is that the product shape -- feed me resumes, give me a ranked shortlist with auto-reject -- forces all four architectures into the same failure pattern. The decision is being made by the model. The recruiter is downstream of the rejection, not upstream of it.

Why a 5-recruiter shop ends up running enterprise rejection logic

There is a structural reason small recruiting firms inherit the worst of this. The market for AI screening is built around enterprise procurement, where the buyer is processing 50,000 applications per quarter and needs a defensible filter. When the same product is sold downstream to boutique firms and small in-house teams, the volume profile is wildly different but the auto-reject defaults are the same.

A boutique firm placing 30 candidates a year on $150K roles cannot afford to silently reject the right candidate. The economics are exactly inverted from the enterprise. At enterprise volume, a 5% false-negative rate costs candidates the company never would have noticed. At boutique volume, the same rate is a missed placement, which is a missed fee, which is real money. The boutique needs the highest-recall screener, not the highest-precision one. The market does not sell that, because the market is calibrated for enterprise.

The other half of the structural problem is that small firms rarely have the technical capacity to evaluate what they are buying. The vendor demo shows a clean shortlist. It does not show the rejected pool. The procurement decision happens on the shortlist. By the time the firm notices that strong candidates are missing, the relationship is six months in and the integration is built.

The legal layer that vendors will not absorb for you

In May 2023 the EEOC published a technical assistance document on the use of AI tools in employment decisions, and announced it through an official EEOC bulletin. The document made several things clear.

Title VII applies to AI-based hiring tools. The four-fifths rule from the Uniform Guidelines on Employee Selection Procedures is the rule of thumb the agency uses as a first check for adverse impact, not a safe harbor. An employer using a vendor's AI tool is responsible for the discriminatory outcome if one is found, regardless of what the vendor's contract says about the vendor's own non-liability. The vendor's promise that the model is bias-free has no weight in an EEOC review. The employer holds the bag.

For a 5-recruiter shop, that is a sentence worth reading three times. The shop signed up for a screening tool to save time. The contract says the vendor is not liable for adverse outcomes. The federal regulator says the shop is liable. The math is that the shop has accepted a legal risk that almost no boutique can afford to defend against in litigation, in exchange for an efficiency gain that, per the Harvard data, is producing false negatives at scale.

The asymmetry is brutal. A vendor selling to enterprise can absorb the cost of one EEOC complaint by retreating into legal defense, settling, and updating the model. A 5-recruiter shop cannot. One complaint, even one that resolves favorably, is months of operational distraction and legal fees that the firm did not budget for. Most boutique firms are not auditing their rejected pool against the four-fifths rule. Most have no idea where they stand.

This is not theoretical. State and local enforcement is running ahead of the federal posture in places like New York City, where Local Law 144 has required bias audits on automated employment decision tools since July 2023. California, Illinois, and Colorado have all moved on similar legislation. The trajectory runs in one direction. If the screener you are running today does not have an audit you could hand to a regulator, you are exposed.

The architecture that fixes this: structured signal extraction

The replacement pattern is straightforward and has been within reach of small recruiting shops since the cost of language model inference dropped in 2024. The model reads the resume. The model does not score the candidate. The model extracts a defined set of facts into a schema, and the schema goes to the recruiter.

A reasonable extraction schema for a recruiting workflow looks like this. Total years of professional experience, broken down by role family. Skills explicitly mentioned, with a confidence rating that distinguishes "listed in skills section" from "demonstrated in a job description." Employment dates with gap durations and any stated reasons for gaps. Education and certifications with dates. Geographic location and remote-work history. Industry tags. Salary expectations if stated. Authorization-to-work signals if stated. Specific notable accomplishments quoted directly from the resume.

That schema is the entire output. There is no fit score. There is no "advance" or "reject" tag. The model is not asked whether the candidate is good. It is asked what the resume says.
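
One way to pin that schema down is as plain dataclasses. This is a sketch, not a standard: every class and field name below is an assumption chosen to mirror the list above, and a real build would adapt them to the custom fields in its own ATS.

```python
from dataclasses import dataclass, fields
from typing import Optional

@dataclass
class Skill:
    name: str
    evidence: str  # "listed" (skills section) or "demonstrated" (job description)

@dataclass
class Role:
    title: str
    role_family: str
    start: str          # "YYYY-MM"
    end: Optional[str]  # None means the role is current

@dataclass
class Gap:
    months: int
    stated_reason: Optional[str]  # None when the resume gives no reason

@dataclass
class Credential:
    name: str
    date: Optional[str]

@dataclass
class ResumeExtraction:
    years_by_role_family: dict[str, float]
    skills: list[Skill]
    roles: list[Role]
    gaps: list[Gap]
    education: list[Credential]
    certifications: list[Credential]
    location: Optional[str]
    remote_work_history: Optional[str]
    industry_tags: list[str]
    salary_expectation: Optional[str]
    work_authorization: Optional[str]
    accomplishments: list[str]  # quoted verbatim from the resume

# Guard: the schema must never grow a decision field.
FIELD_NAMES = {f.name for f in fields(ResumeExtraction)}
assert not FIELD_NAMES & {"fit_score", "recommendation", "advance", "reject"}
```

The guard at the bottom is the design choice in code form: if someone later adds a score field, the module fails on import instead of quietly turning the extractor back into a screener.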

The recruiter sees a screen with the structured fields beside the original resume. They can sort, filter, and search. They can apply their own rubric -- "anyone with at least four years in the relevant role family, no unexplained gap longer than 18 months, located within commuting distance, makes the first round" -- and the rubric runs against the structured fields, not against the model's opinion of fit. The recruiter is upstream of the rejection. The 27 million hidden workers do not get filtered out by a black box.
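
The example rubric in that paragraph can be written as a few lines of ordinary code. The sketch below assumes the extracted fields arrive as a plain dictionary with hypothetical keys (`years_by_role_family`, `gaps`, `location`), and that "commuting distance" has already been reduced to a set of acceptable locations.

```python
def first_round(candidate: dict, role_family: str,
                commutable: set[str]) -> tuple[bool, list[str]]:
    """Apply the written rubric and return (passes, reasons it did not pass)."""
    reasons = []

    years = candidate["years_by_role_family"].get(role_family, 0)
    if years < 4:
        reasons.append(f"{years} years in {role_family}; rubric asks for 4")

    for gap in candidate["gaps"]:
        # Only unexplained gaps count against the candidate.
        if gap["months"] > 18 and not gap.get("stated_reason"):
            reasons.append(f"unexplained gap of {gap['months']} months")

    if candidate["location"] not in commutable:
        reasons.append(f"{candidate['location']} is outside commuting distance")

    return (not reasons, reasons)

# Hypothetical candidate: a long gap, but with a stated reason.
candidate = {
    "years_by_role_family": {"backend_engineering": 5},
    "gaps": [{"months": 36, "stated_reason": "caregiving"}],
    "location": "Austin, TX",
}
passes, reasons = first_round(candidate, "backend_engineering", {"Austin, TX"})
```

Every rejection comes back with the rule that caused it, so the recruiter can read the reason, disagree with it, and override it.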

The technical implementation is small. A webhook on a new candidate event in the ATS. A serverless function that calls Claude, GPT, or any LLM with structured outputs and the extraction prompt. A write-back to custom fields on the candidate record. A view in the ATS that surfaces the structured output. For a Bullhorn or Greenhouse-shaped ATS, this is days of work, not weeks. The recurring cost is API calls plus a small amount of infrastructure -- typically low three figures per month at boutique volume.
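
As a sketch of that flow, the handler below takes the LLM call and the ATS write-back as injected functions, so it makes no claims about any particular vendor's SDK. The event shape, the prompt wording, and the `ALLOWED_FIELDS` names are all assumptions for illustration.

```python
import json
from typing import Callable

# Hypothetical field names; a real build would match its own schema.
ALLOWED_FIELDS = {
    "years_by_role_family", "skills", "roles", "gaps", "education",
    "certifications", "location", "remote_work_history", "industry_tags",
    "salary_expectation", "work_authorization", "accomplishments",
}

EXTRACTION_PROMPT = (
    "Extract the listed fields from the resume as a JSON object. "
    "Report only what the resume states. Do not rate, rank, or recommend."
)

def handle_new_candidate(
    event: dict,
    call_llm: Callable[[str, str], str],       # (prompt, resume_text) -> JSON string
    write_back: Callable[[str, dict], None],   # (candidate_id, fields) -> None
) -> dict:
    """Run extraction for one new-candidate event and store the result."""
    raw = call_llm(EXTRACTION_PROMPT, event["resume_text"])
    data = json.loads(raw)

    # Refuse anything outside the schema, including scores or verdicts.
    unexpected = set(data) - ALLOWED_FIELDS
    if unexpected:
        raise ValueError(f"unexpected fields in extraction: {sorted(unexpected)}")

    write_back(event["candidate_id"], data)
    return data
```

In production `call_llm` would wrap whichever structured-output API the firm uses and `write_back` would wrap the ATS's REST API. Keeping both behind plain callables also makes the handler easy to test with fakes.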

The audit log is the legal posture

The build above ends in one step that is more important than the rest: log every extraction with the model version, the schema version, and the resume hash. With logged extractions the firm can audit its rejected pool against the four-fifths rule any time. The recruiter's rubric is written down. The model's role is bounded to extraction. If a regulator asks how the screening decision was made, the answer is "the recruiter applied this rubric to these extracted fields, and here is the audit log." That is a defensible posture in a way that "the AI told us they were not a good fit" is not.
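
A minimal version of that log entry needs only the standard library. The sketch below assumes an append-only JSON Lines file; the function names and the record keys are illustrative, and the model and schema version strings are whatever the firm's own build reports.

```python
import hashlib
import json
from datetime import datetime, timezone

def audit_record(resume_bytes: bytes, extraction: dict,
                 model_version: str, schema_version: str) -> dict:
    """Build one audit entry tying an extraction to the exact resume and versions."""
    return {
        "logged_at": datetime.now(timezone.utc).isoformat(),
        "resume_sha256": hashlib.sha256(resume_bytes).hexdigest(),
        "model_version": model_version,
        "schema_version": schema_version,
        "extraction": extraction,
    }

def append_audit_log(path: str, record: dict) -> None:
    """Append the record as one JSON line; existing lines are never rewritten."""
    with open(path, "a", encoding="utf-8") as f:
        f.write(json.dumps(record, sort_keys=True) + "\n")

# Hypothetical values for illustration.
record = audit_record(
    resume_bytes=b"%PDF-1.7 example resume bytes",
    extraction={"location": "Austin, TX"},
    model_version="example-model-2026-01",
    schema_version="v1",
)
```

Hashing the resume bytes rather than storing the file lets the log prove which document was read without duplicating personal data into a second system.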

The shape of the work is not enterprise procurement. It is a custom build that takes a few days, runs on a few hundred dollars a month, and stays the firm's property. The reason this has not been the default architecture for boutique recruiting is that the firms selling AI screening software make their margin on the SaaS subscription, not on the build. They have no incentive to tell a 5-recruiter shop that the right tool is a Tuesday-afternoon n8n workflow plus a Claude prompt.

The line to draw

The pattern generalizes beyond recruiting. Wherever a language model has been inserted between an applicant and a human reviewer, the question is whether the model is extracting facts for a human to act on, or making the decision. When the model decides, the system inherits every bias in the training data, every quirk in the prompt, every drift in the model version, and the rejected party has no path to challenge an opaque score. When the model extracts and a human or a written rubric decides, the system is auditable and the failure modes are visible.

For any small recruiting firm or in-house TA team currently running an AI screener, the move is to audit which decisions the model is being asked to make. Anywhere the model produces a fit score and an auto-reject threshold acts on it, replace that step with structured extraction and a recruiter-applied rubric. The throughput cost is real. The improvement in candidate quality, in audit posture, and in win rate against enterprise competitors is also real, and it compounds.

If you are running a recruiting operation and want a specific read on how your current screening stack would hold up to a four-fifths-rule audit, or how a structured-extraction workflow would slot into your existing ATS, the AI Operations X-Ray is 90 seconds and free, and it returns a five-item ranked report on the automation moves that move the needle most for your shape of business. If you are weighing whether to build a custom workflow versus extend an existing platform, the recruiting automation playbook covers the decision in depth, and the Claude agent versus framework comparison covers the build-versus-buy math at the layer below it.

The 27 million number is not going to fix itself. The vendors selling screeners are not going to volunteer that their architecture is the problem. The boutique firms that figure this out first will be the ones placing the candidates that everyone else's filter rejected.

Frequently asked questions

- Are AI resume screeners actually rejecting good candidates, or is this an old complaint about keyword ATSes?

- Both, and that is the point. The Harvard Business School study that put the figure at 27 million hidden workers in the US was published in 2021 and focused primarily on keyword-era ATSes. The shift to embedding-based and LLM-based screening since then has not eliminated the problem. It has changed which candidates get filtered. Where keyword ATSes rejected anyone whose resume did not contain the right phrase, modern AI screeners reject candidates whose resumes do not look statistically similar to the resumes the model was trained or prompted to favor. The rejection criterion is opaque, the candidate has no recourse, and the recruiter usually does not see the candidate at all. It is the same problem with better marketing.

- Does this mean small recruiting firms should not use AI for resume screening at all?

- It means small recruiting firms should not use AI to make the screening decision. Using AI to read a resume, extract structured facts (years of experience by skill, employment dates, location, certifications, gap patterns), and surface them to a recruiter is fast, accurate, and removes the keyword-matching busywork. Using AI to assign a fit score and auto-reject below a threshold is the part that produces the false negatives. The architecture matters more than the tool.

- Are there legal risks if my screener silently rejects qualified candidates from protected classes?

- Yes. The EEOC issued formal technical assistance in May 2023 confirming that Title VII applies to AI-based hiring tools, and that the employer is liable for disparate impact even when the tool is built and operated by a third-party vendor. If a vendor tells you their screener is bias-free, that promise has no legal weight. You are still the party who has to demonstrate validity if a complaint is filed. Most small recruiting firms are running screeners they could not defend in an audit, sourced from vendors whose contracts explicitly disclaim this exact liability.

- What does structured signal extraction look like in practice?

- You build a small workflow that takes the resume PDF, parses it, sends it to a language model with a strict schema (Anthropic SDK or OpenAI structured outputs both work), and asks for specific fields back. Years of relevant experience, last three job titles, technical skills with confidence rating, employment gaps with stated reasons, certification dates. The model returns JSON. The JSON drops into the ATS or a Google Sheet. The recruiter looks at the structured output beside the resume and decides whether to advance. The model never assigns a score, never auto-rejects, and never sees fields it does not need.

- How is this different from buying an off-the-shelf AI screener that already does this?

- The off-the-shelf screeners almost universally include a fit-score field and an auto-reject threshold, because that is what enterprise procurement teams expect to see. Once that field exists, recruiters use it. The score becomes the decision, not an input to the decision. A custom build leaves the score field out entirely. The recruiter sees facts, not a model's opinion of fit. That single architectural choice is the difference between a tool that filters out hidden workers and a tool that surfaces them.

- Will this slow my recruiters down compared to a fully automated screener?

- It can, on raw volume. A fully automated screener reading 1,000 resumes and surfacing 50 will always feel faster than a structured-extraction workflow that asks a recruiter to make 1,000 decisions. The honest tradeoff is that the automated screener is fast because it is wrong about a meaningful percentage of the candidates it rejects, and you do not see the cost. The structured workflow is slower because the recruiter is making the calls. For a 1-10 recruiter shop placing in the $80K to $200K range, the marginal cost of looking at one extra qualified candidate is small and the marginal value is large. The math is different at enterprise volume, which is part of why enterprise screeners exist.

- What about LinkedIn Recruiter and platforms with their own AI matching?

- Those are doing the same thing under a different name. LinkedIn's AI matching scores candidates against a job and sorts the results. The recruiter sees the top of the sort. Candidates below the cut do not appear. The keyword-era complaint that great candidates are invisible because their resume did not include the magic word is now an embedding-era complaint that great candidates are invisible because their LinkedIn profile is not statistically similar to the resumes that previously got hired. The structural problem has moved up a layer.

- If I am running on Bullhorn or Greenhouse, can I add structured-extraction screening without ripping out my ATS?

- Yes, and you should. Bullhorn and Greenhouse both have webhook and REST API support at standard tiers. The pattern is to trigger the extraction workflow on a new candidate event, post the structured output back to a custom field on the candidate record, and let the recruiter view it inside the ATS interface they already use. The ATS stays the system of record. The AI is a parser, not a decision-maker. A workflow like this takes a few days to build and, depending on volume, runs on low three figures per month in API and infrastructure cost.

- How do I tell if my current AI screener has disparate impact?

- You run a backwards audit on the rejected pool. Take every candidate your screener processed over the last 90 days, both advanced and rejected, segment them by the protected classes you can approximate (gender from name, age from graduation year, geography), and compute the advance rate for each group. Then divide each group's rate by the highest group's rate. The four-fifths rule from the Uniform Guidelines on Employee Selection Procedures is the rough threshold most counsel applies: a ratio below 0.8 is the kind of gap that would not survive an EEOC review. If your screener's outcomes fail the four-fifths test, you have a problem regardless of what your vendor claims about their model.
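
The four-fifths check itself is a few lines of arithmetic. The sketch below uses made-up counts and generic group labels, and it treats the rule as the rough first-pass threshold described above, not as a legal determination.

```python
def selection_rates(counts: dict[str, tuple[int, int]]) -> dict[str, float]:
    """counts maps group -> (advanced, total applicants)."""
    return {g: adv / total for g, (adv, total) in counts.items() if total > 0}

def impact_ratios(counts: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Each group's selection rate divided by the highest group's rate."""
    rates = selection_rates(counts)
    if not rates:
        return {}
    top = max(rates.values())
    if top == 0:
        return {g: 1.0 for g in rates}  # nobody advanced; no disparity to measure
    return {g: rate / top for g, rate in rates.items()}

# Illustrative counts only: (advanced, applied) per group over the audit window.
counts = {"group_a": (48, 80), "group_b": (12, 40)}
ratios = impact_ratios(counts)
flagged = [g for g, ratio in ratios.items() if ratio < 0.8]
```

Here `group_a` advances at 60% and `group_b` at 30%, so `group_b` has an impact ratio of 0.5 and is flagged. Small groups make the ratio noisy, so a flag on a handful of applicants is a prompt to look closer, not a verdict.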
