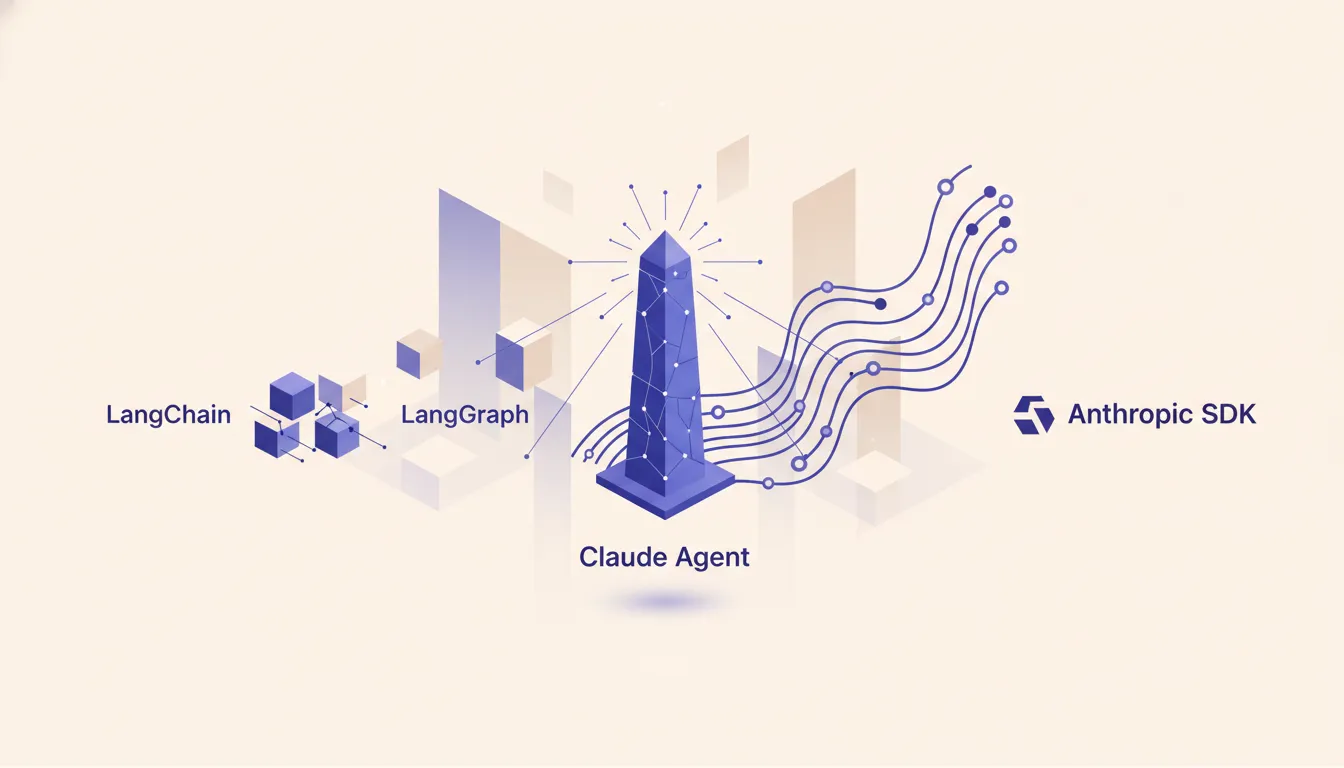

Anthropic SDK vs LangChain for building Claude agents

LangChain is the default. LangGraph is the upgrade path. Both add abstraction you pay for in production. Here is when the Anthropic SDK is the shorter build, the cheaper runtime, and the faster debug loop for a Claude-only agent.

--

Why LangChain is where every team starts

When you decide to build an LLM-powered agent, LangChain is usually the first name that comes up. That is not an accident. The framework abstracts over a dozen model providers behind a common interface, has an enormous community, and ships tutorials for almost every agent pattern you might want. If your goal is to get something working by end of day, LangChain is genuinely good at that.

The provider-agnostic story is the real draw. You write your chain once and, in theory, you can swap from Claude to GPT-4o to Gemini with a config change. For teams evaluating multiple models or building demos for stakeholders who want to see options, that flexibility has real value. The LangChain Hub also gives you a library of pre-built prompts and chain templates. Getting from zero to a working proof of concept is faster with LangChain than with raw API code.

The community compounds this. LangChain has been around long enough to have extensive Stack Overflow answers, blog posts, and YouTube walkthroughs for almost every failure mode you will encounter in early development. When you are moving fast and just need something that works, that ecosystem matters.

Where LangChain hits the wall

The abstraction that makes LangChain fast to start with is the same thing that makes it painful at production scale. LangChain releases frequently add or change internal interfaces. If you build on a specific version and need to upgrade for a bug fix or security patch, you can find that behavior changed in ways the changelog did not clearly document. Teams running LangChain in production know this grind well: upgrade, something breaks, debug for a day, repeat.

Debugging agent misbehavior is where the abstraction cost is highest. When your Claude agent calls the wrong tool, chains outputs incorrectly, or hallucinates a multi-step reasoning path, diagnosing why is much harder when you are looking through a LangChain lens. The framework adds layers between what you wrote and what actually hit the API. Stack traces go through internal LangChain plumbing before reaching your code. You end up reading framework source to understand what your agent actually sent to Claude.

The token accounting problem is subtler but also real. LangChain manages prompt assembly behind the scenes. When your production costs run high and you want to tighten prompts or add caching, the framework often obscures exactly what is being sent and when. Direct SDK calls make this transparent. Every message, every system prompt, every tool definition is exactly what you see in your code.
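As a rough illustration of that transparency, the sketch below tallies the usage figures that come back with each response. It assumes each response's usage has been converted to a plain dict (for example via something like `response.usage.model_dump()`, which is an assumption about how you call the SDK). The field names follow the usage fields the Messages API reports; the `UsageTotals` class itself is hypothetical.

```python
from dataclasses import dataclass

# Usage fields reported on a Messages API response.
USAGE_FIELDS = (
    "input_tokens",
    "output_tokens",
    "cache_creation_input_tokens",
    "cache_read_input_tokens",
)


@dataclass
class UsageTotals:
    """Hypothetical running tally of token usage across agent calls."""

    input_tokens: int = 0
    output_tokens: int = 0
    cache_creation_input_tokens: int = 0
    cache_read_input_tokens: int = 0

    def add(self, usage: dict) -> None:
        """Accumulate one response's usage; missing or None fields count as zero."""
        for name in USAGE_FIELDS:
            setattr(self, name, getattr(self, name) + (usage.get(name) or 0))
```

Calling `totals.add(...)` after every API call in the loop gives a per-request view of where tokens go, which is the number you need before tightening prompts or adding caching.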

LangGraph improves LangChain but inherits the same problems

LangGraph is the more sophisticated sibling. It builds a state machine on top of LangChain where each node in a directed graph is a step in your agent flow and edges encode the routing logic. This is genuinely better architecture than a flat chain for complex multi-step agents. If you have an agent with five distinct stages, explicit branching conditions, and state that needs to persist across steps, a graph is a cleaner mental model than a linear chain.

The practical improvement is that LangGraph makes agent flow visible. You can draw the graph, trace which node executed when, and reason about what state looked like at each step. For workflows with real branching complexity, that debuggability is worth something.

But LangGraph still builds on LangChain's core abstractions and is usually used alongside it, which means it inherits the version churn, the abstraction leaks, and the debugging friction. It also adds its own learning curve. The node-and-edge model requires thinking about your agent differently, and the documentation assumes you are already comfortable with LangChain concepts. For a team building a Claude-only agent that does not need multi-model routing, LangGraph means taking on overhead to solve a problem the Anthropic SDK handles directly and more simply.

What the Anthropic SDK gives you directly

The Anthropic SDK puts you one layer above the API with nothing in between. You define your tools as JSON schemas and pass them in the tools array. Claude returns structured tool calls you execute and feed back. The loop is explicit in your code: call API, handle response, execute tools, call API again. Nothing is hidden.

Tool use in the raw SDK is cleaner to reason about than LangChain's tool abstractions. You write the JSON schema once, wire up your Python or TypeScript function, and handle the tool_use content block directly. When something goes wrong, your stack trace goes to your code, not through framework internals. The cognitive overhead of understanding what your agent actually did drops significantly.
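A minimal sketch of that wiring, under stated assumptions: the `get_weather` tool, its schema fields, and the `run_tool_calls` helper are all hypothetical, and content blocks are shown as plain dicts rather than SDK objects. The block shapes (`tool_use` with `id`, `name`, `input`; `tool_result` with `tool_use_id`, `content`, `is_error`) follow the Messages API.

```python
import json

# Hypothetical tool definition: the name and fields are illustrative only.
GET_WEATHER_TOOL = {
    "name": "get_weather",
    "description": "Return the current weather for a city.",
    "input_schema": {
        "type": "object",
        "properties": {"city": {"type": "string", "description": "City name"}},
        "required": ["city"],
    },
}


def get_weather(city: str) -> dict:
    """Stand-in for a real weather lookup."""
    return {"city": city, "forecast": "sunny"}


# Map tool names to the local functions that implement them.
TOOL_HANDLERS = {"get_weather": get_weather}


def run_tool_calls(content_blocks: list) -> list:
    """Turn tool_use blocks into tool_result blocks for the next user turn."""
    results = []
    for block in content_blocks:
        if block.get("type") != "tool_use":
            continue  # text blocks need no action here
        try:
            handler = TOOL_HANDLERS.get(block["name"])
            if handler is None:
                raise ValueError(f"unknown tool: {block['name']}")
            output = json.dumps(handler(**block["input"]))
            results.append(
                {"type": "tool_result", "tool_use_id": block["id"], "content": output}
            )
        except Exception as exc:
            # Report the failure back to the model instead of crashing the loop.
            results.append(
                {
                    "type": "tool_result",
                    "tool_use_id": block["id"],
                    "content": str(exc),
                    "is_error": True,
                }
            )
    return results
```

Because the dispatch table is an ordinary dict, a failing tool call raises inside your own function and shows up in your own stack trace.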

Streaming and prompt caching are the two SDK features that compound the advantage. Streaming lets you process tool call events as they arrive rather than waiting for a complete response, which matters for agents with long reasoning chains. Prompt caching lets you mark stable portions of your context, like tool definitions and system prompts, so Claude reads from cache on repeat calls. A production agent running dozens of queries per hour against the same tool definitions can cut the cost of those tokens by 80-90%. LangChain surfaces neither cleanly, because the event-level stream format and the cache markers are Claude-specific details the framework was not designed around.
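To make the streaming half concrete, here is a sketch of assembling tool calls from a stream as each content block closes. It assumes events arrive as plain dicts shaped like the raw stream payloads (`content_block_start`, `content_block_delta` with an `input_json_delta`, `content_block_stop`); the SDK hands you typed objects instead, so treat the dict access and the `completed_tool_calls` helper as illustrative.

```python
import json
from typing import Iterable, Iterator


def completed_tool_calls(events: Iterable[dict]) -> Iterator[dict]:
    """Yield each tool call as soon as its content block closes."""
    open_calls = {}  # content block index -> partially received call
    for event in events:
        kind = event.get("type")
        if kind == "content_block_start" and event["content_block"]["type"] == "tool_use":
            block = event["content_block"]
            open_calls[event["index"]] = {
                "id": block["id"],
                "name": block["name"],
                "json": "",
            }
        elif kind == "content_block_delta" and event["delta"]["type"] == "input_json_delta":
            call = open_calls.get(event["index"])
            if call is not None:
                # Tool input arrives as fragments of one JSON document.
                call["json"] += event["delta"]["partial_json"]
        elif kind == "content_block_stop" and event["index"] in open_calls:
            call = open_calls.pop(event["index"])
            yield {
                "id": call["id"],
                "name": call["name"],
                "input": json.loads(call["json"] or "{}"),
            }
```

Since the function is a generator, the caller can start executing the first tool while later blocks are still streaming.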

When LangChain still wins

LangChain is genuinely the right tool when your architecture requires talking to multiple model providers. If you are building an evaluation harness that needs to run the same prompt against Claude, GPT-4o, and Gemini and compare outputs, LangChain's provider abstraction earns its overhead. Writing that comparison from scratch against three separate SDKs is real work. LangChain handles it.

Research and rapid prototyping across model families is another legitimate case. When your team is still deciding which model to build on and needs to move fast, LangChain's ecosystem of pre-built components genuinely speeds you up. The cost of framework complexity is lower when you are building a demo than when you are operating at production scale.

Evaluation pipelines using LangSmith also benefit from staying in the LangChain ecosystem. If your workflow already uses LangSmith for tracing and evaluation, migrating the evaluation layer to the raw SDK creates more work than it saves. The integration is tight enough that it is worth keeping if you are already invested.

A concrete migration: from LangChain to the Anthropic SDK

Consider a customer support agent built on LangChain. It classifies incoming tickets, routes to a tool that looks up order history, and drafts a response. The LangChain version uses a ConversationalRetrievalChain, a custom tool wrapper around the order lookup API, and an output parser that extracts the draft response from Claude's output.

Migrating to the Anthropic SDK starts by replacing the chain with an explicit loop. You define the order lookup as an Anthropic tool schema. You write the agentic loop: call Claude with the ticket in the user turn and the tool definition in the tools array. If Claude returns a tool_use block, execute the lookup and add the result as a tool_result content block. Call Claude again with the updated message history. Loop until Claude returns a final text response with no pending tool calls.
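The loop described above can be sketched as follows. This is an outline under assumptions, not the migration's actual code: `create_message` stands in for a thin wrapper around the SDK's messages call that returns a dict with a `content` list, `execute_tool` is a hypothetical dispatcher that returns a string result, and `max_turns` is an added safety cap.

```python
from typing import Callable

MAX_TURNS = 8  # assumption: cap on API round trips so a confused agent cannot loop forever


def run_agent(
    create_message: Callable[..., dict],
    execute_tool: Callable[[str, dict], str],
    ticket: str,
    tools: list,
    system: str,
    max_turns: int = MAX_TURNS,
) -> str:
    """Call the model, run any requested tools, and repeat until a final text reply."""
    messages = [{"role": "user", "content": ticket}]
    for _ in range(max_turns):
        response = create_message(system=system, tools=tools, messages=messages)
        content = response["content"]
        # The assistant turn goes back into history unchanged.
        messages.append({"role": "assistant", "content": content})

        tool_calls = [block for block in content if block["type"] == "tool_use"]
        if not tool_calls:
            # No pending tool calls: the text blocks are the drafted response.
            return "".join(b["text"] for b in content if b["type"] == "text")

        # Every tool_use block gets a matching tool_result in the next user turn.
        messages.append(
            {
                "role": "user",
                "content": [
                    {
                        "type": "tool_result",
                        "tool_use_id": call["id"],
                        "content": execute_tool(call["name"], call["input"]),
                    }
                    for call in tool_calls
                ],
            }
        )
    raise RuntimeError("agent did not finish within max_turns")
```

Injecting `create_message` keeps the loop testable with a fake in place of the network call, and it makes the full message history something you can log or inspect at any point.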

The simplification is substantial. You drop the output parser because Claude returns structured tool calls, so there is no intent to extract from raw text. The routing logic becomes visible in your Python rather than hidden inside a chain class. Adding prompt caching to the system prompt means the input-token cost of that prompt drops sharply for every subsequent ticket that arrives while the cache entry is still live. The full migration for a single-agent LangChain app typically runs 1-3 days. You write more code, but you own it completely.

Extended thinking and caching: two SDK features LangChain does not expose cleanly

Extended thinking is a Claude-specific capability that lets you allocate a budget of thinking tokens before Claude produces a visible response. For complex agent tasks, this is a meaningful quality improvement. Claude reasons through the problem before committing to a path. The SDK exposes this as a thinking parameter on the messages call with a budget_tokens value. The parameter is not part of any shared provider interface, so whether you can reach it through LangChain depends on the provider-specific integration passing it through.
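As a sketch of what that looks like in practice, the helper below builds the keyword arguments for a messages call with thinking enabled. The `thinking_request` and `visible_text` helpers are hypothetical, the model ID is a placeholder to replace with a current one, and the two checks (a minimum budget of 1,024 tokens, and a budget below `max_tokens`) reflect the documented constraints as I understand them, so verify them against the current documentation.

```python
MIN_THINKING_BUDGET = 1024  # assumption: documented minimum at time of writing


def thinking_request(
    messages: list,
    budget_tokens: int,
    max_tokens: int,
    model: str = "claude-sonnet-4-5",  # placeholder: substitute a current model ID
) -> dict:
    """Build keyword arguments for a messages call with extended thinking enabled."""
    if budget_tokens < MIN_THINKING_BUDGET:
        raise ValueError(f"budget_tokens must be at least {MIN_THINKING_BUDGET}")
    if budget_tokens >= max_tokens:
        raise ValueError("budget_tokens must be smaller than max_tokens")
    return {
        "model": model,
        "max_tokens": max_tokens,
        "thinking": {"type": "enabled", "budget_tokens": budget_tokens},
        "messages": messages,
    }


def visible_text(content_blocks: list) -> str:
    """Join the text blocks of a response, skipping thinking blocks."""
    return "".join(b["text"] for b in content_blocks if b["type"] == "text")
```

The returned dict would be unpacked into the SDK call, along the lines of `client.messages.create(**thinking_request(...))`. The response then carries thinking blocks ahead of the text blocks, which is why `visible_text` filters by type.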

Prompt caching works by marking content blocks with cache_control: {"type": "ephemeral"}. The caching documentation covers the rules for what can be cached and how long it persists. For agents with large tool definitions or long system prompts, the economics are compelling. A tool definition block that costs 2,000 input tokens per call is billed at a small fraction of that on every call after the first in a cache window, because cache reads are priced well below normal input tokens. If you are running an agent that serves 50 requests per hour, caching the tool definitions alone can meaningfully cut your API bill.
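A short sketch of both halves: marking the stable prefix, and estimating what it saves. The helper names are hypothetical. The price multipliers (cache writes at 1.25x and cache reads at 0.10x the base input price) are assumptions based on published pricing at the time of writing, and the estimate assumes every call lands while the cache entry is still live.

```python
def with_cache_breakpoints(system_prompt: str, tools: list) -> dict:
    """Return system and tools with cache_control on the last stable block of each."""
    system = [
        {
            "type": "text",
            "text": system_prompt,
            "cache_control": {"type": "ephemeral"},
        }
    ]
    cached_tools = [dict(tool) for tool in tools]  # shallow copies; inputs stay untouched
    if cached_tools:
        # A marker on the last tool covers the whole tools array before it.
        cached_tools[-1]["cache_control"] = {"type": "ephemeral"}
    return {"system": system, "tools": cached_tools}


def cached_prefix_cost(
    prefix_tokens: int,
    calls: int,
    write_multiplier: float = 1.25,  # assumption: cache write price vs base input
    read_multiplier: float = 0.10,  # assumption: cache read price vs base input
) -> float:
    """Input-token equivalents billed for the prefix over `calls` warm-cache requests."""
    if calls <= 0:
        return 0.0
    # The first call writes the cache; every later call reads from it.
    return prefix_tokens * (write_multiplier + read_multiplier * (calls - 1))
```

Under those assumptions, a 2,000-token tool block over 50 calls bills roughly 12,300 token-equivalents instead of 100,000, which lands inside the 80-90% range. If traffic is sparse enough that the cache expires between calls, each miss pays the write price again and the savings shrink.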

Neither of these features is fundamentally incompatible with using LangChain as a higher-level wrapper. But in practice, framework abstractions often do not pass through provider-specific parameters cleanly. You end up hacking around the abstraction to get to the raw API call, which defeats the purpose of using the framework. If you know you want extended thinking and caching, starting with the SDK is simpler than fighting a framework that was not built for them.

When to reach for Claude Code instead of building an agent at all

There is a class of tasks where the right answer is not "build a custom agent" but "use Claude Code directly." If the work is terminal-adjacent, involves reading and editing files in a repository, or requires running commands and interpreting output, Claude Code is often the correct layer. It ships with the tool definitions, the agentic loop, and the repo-awareness already built. You are not adding a framework; you are using a purpose-built tool for that category of work.

The signal is whether your agent's primary environment is a codebase or a file system. A customer support agent that queries an API and drafts responses belongs in a custom SDK-built loop. A coding assistant that reads files, proposes changes, and runs tests belongs in Claude Code. When you see teams building custom agents in LangChain or the raw SDK to do what is essentially file-editing and terminal work, they are building something Claude Code already does better. Know the boundary and you avoid a significant amount of unnecessary engineering. For MCP server development and other tool-connected workflows, the same principle applies: check what already exists before building the plumbing yourself.

Frequently asked questions

- Does LangChain still make sense in 2026?

- Yes, for multi-provider routing and rapid prototyping across models. For a Claude-only production agent, the framework overhead often costs more than it saves.

- What does LangGraph add over LangChain?

- State machines. Explicit node-and-edge graphs for stateful agent flows. Useful when your agent has clear branching stages and you want the flow diagrammed in code.

- Can I use the Anthropic SDK with streaming and tool use at the same time?

- Yes. The SDK supports streaming responses alongside tool calls. You handle the event stream directly, which gives tighter control than LangChain abstractions.

- How hard is it to migrate from LangChain to the Anthropic SDK?

- Depends on surface area. A single-agent LangChain app usually ports in 1-3 days. A multi-chain LangGraph workflow takes longer because you rebuild the state machine by hand.

- What about the OpenAI Agents SDK or Anthropic-specific frameworks?

- The OpenAI Agents SDK is the equivalent path on the OpenAI side. Anthropic ships the SDK plus Claude Code as the agent layer. For Claude specifically, those two cover most needs.

Related reading

- Claude skills vs tools vs MCP: which to reach for

Claude ships three overlapping ways to extend what an agent can do: skills, custom tools, and MCP servers. They solve different problems and most teams pick the wrong one first.

- Claude Code as a team force multiplier

Claude Code is not Cursor with a bigger context window. It is the first AI product that ships durable team-level leverage instead of per-seat chat speedup. Here is what actually changes when a team adopts it.