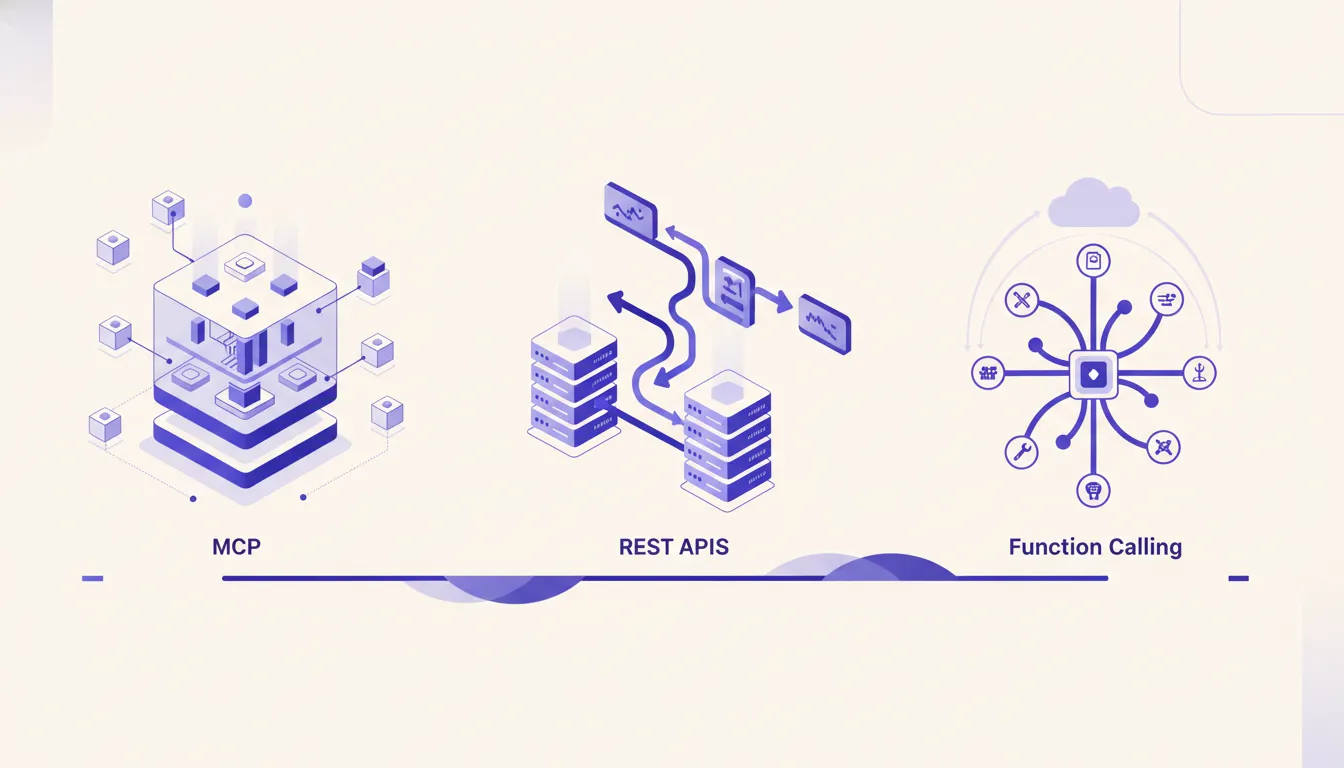

MCP vs REST vs function calling: which to use

Three abstractions expose data and actions to an LLM. They look interchangeable. They are not. Here is the decision tree.

--

Why these three abstractions get confused

REST APIs, function calling, and the Model Context Protocol all do a version of the same thing: they expose data or actions so something else can use them. That surface-level similarity is why developers treat them as interchangeable. They are not, and picking the wrong one creates real overhead down the line.

The confusion sharpens when you are building an AI feature for the first time. You know your data lives behind an API. You know the LLM needs access to it. And you have three paths in front of you with no obvious signpost. The right question is not "which is better?" It is "who is the caller, where does the code run, and how reusable does this need to be?" Answer those three questions and the decision mostly makes itself.

REST APIs: old workhorse, still right sometimes

REST is not an AI abstraction. It is a general-purpose pattern for exposing resources over HTTP. Services call it. Dashboards call it. Cron jobs call it. Other APIs call it. The caller is another piece of software, not a language model making ad-hoc decisions about what to invoke.

That distinction matters. REST is designed around predictable, structured requests. You send a GET to /orders/{id} and you get a JSON order back. You send a POST to /orders with a body and a new order is created. The caller knows the schema, knows the endpoint, and makes an explicit decision to call it. There is no ambiguity, and that is the point.

REST remains right in AI architectures when the LLM is not the direct caller. If your AI pipeline uses an orchestration service that calls a REST endpoint to fetch data before constructing a prompt, that is a perfectly valid pattern. The service is the caller, not the model. REST is also the right interface when you have non-LLM clients, webhook receivers, and mobile apps all hitting the same backend. You are not going to rewrite your entire API surface for one AI use case. Nor should you.
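A minimal sketch of that orchestration pattern, assuming a hypothetical `https://api.example.com` backend that serves the `/orders/{id}` endpoint mentioned above. The service makes the HTTP call; the model only ever sees the resulting prompt text. The function names, the `fetch_json` parameter, and the prompt wording are illustrative, not part of any particular framework.

```python
import json
from typing import Callable
from urllib.request import urlopen

BASE_URL = "https://api.example.com"  # hypothetical backend


def http_get_json(url: str) -> dict:
    """Plain REST call made by the orchestration service, not by the model."""
    with urlopen(url, timeout=10) as response:
        return json.load(response)


def build_order_prompt(
    order_id: str,
    fetch_json: Callable[[str], dict] = http_get_json,
) -> str:
    """Fetch an order over REST, then embed it in the prompt sent to the model."""
    order = fetch_json(f"{BASE_URL}/orders/{order_id}")
    return (
        "Summarize the status of this order for a support agent.\n"
        f"Order data: {json.dumps(order, sort_keys=True)}"
    )
```

Passing `fetch_json` as a parameter keeps the REST call swappable in tests, and makes it explicit that the model never chooses the endpoint here.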

Function calling: the LLM-native single-app pattern

Function calling is what happens when you want an LLM to decide at runtime which tool to invoke, with which arguments, based on what the user asked. You define a set of tools in your application, pass them in the API request alongside the conversation, and Claude returns a structured tool call when it determines a tool is needed. Your application executes the call, returns the result, and the conversation continues.
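The application side of that loop can be sketched as follows. The tool definition uses the `name` / `description` / `input_schema` shape from the Anthropic tool use format, and the handler consumes `tool_use` content blocks and produces `tool_result` blocks. The `lookup_customer` tool, its in-memory data, and the `run_tool_calls` helper are hypothetical; the actual API request is left out so the sketch runs without credentials.

```python
import json

# Tool definitions are passed with the API request alongside the conversation.
TOOLS = [
    {
        "name": "lookup_customer",  # hypothetical tool
        "description": "Look up a customer record by ID.",
        "input_schema": {
            "type": "object",
            "properties": {"customer_id": {"type": "string"}},
            "required": ["customer_id"],
        },
    }
]

# Stand-in for a real data source living in the same codebase.
_CUSTOMERS = {"c_123": {"name": "Ada Lovelace", "plan": "pro"}}


def lookup_customer(customer_id: str) -> dict:
    return _CUSTOMERS.get(customer_id, {"error": "not found"})


HANDLERS = {"lookup_customer": lookup_customer}


def run_tool_calls(content_blocks: list[dict]) -> list[dict]:
    """Execute every tool_use block and build the matching tool_result blocks."""
    results = []
    for block in content_blocks:
        if block.get("type") != "tool_use":
            continue  # text blocks and others need no execution
        handler = HANDLERS.get(block["name"])
        if handler is None:
            results.append({
                "type": "tool_result",
                "tool_use_id": block["id"],
                "content": f"Unknown tool: {block['name']}",
                "is_error": True,
            })
            continue
        output = handler(**block["input"])
        results.append({
            "type": "tool_result",
            "tool_use_id": block["id"],
            "content": json.dumps(output),
        })
    return results
```

The returned blocks go back to the model as the content of the next user-role message, which is what lets the conversation continue with the tool output in context.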

This is the right pattern for one app, one AI session. You are building a chatbot that can look up customer records, or a code assistant that can read files, or an internal tool that queries a database. The tools are defined in your application. They live in your codebase. They run in your process. Nothing outside your app ever needs to know those tools exist.

Function calling is lightweight by design. There is no separate server to deploy, no protocol handshake, no client configuration. You write the tool definitions, pass them with the API call, and handle the results. The Anthropic tool use docs cover the full schema. For single-application use cases, this is the fastest path from idea to working integration. The moment you need those same tools accessible from Claude Desktop, from a teammate's Claude Code session, or from a second application you are building, function calling starts showing its limits.

MCP: reusable contract across LLM clients

The Model Context Protocol is a standardized interface between LLM clients and the systems they need to access. An MCP server exposes tools, resources, and prompts. Any MCP-compatible client (Claude Desktop, Claude Code, or any other client that implements the spec) can connect to that server and use those tools without you writing separate integrations for each one.

The key difference from function calling is that the tools live outside any single application. They live in a server you deploy and maintain independently. When a team member opens Claude Desktop to do research and another opens Claude Code to write code, both have access to the same tools. If you add a new tool to the server, every client picks it up the next time it lists the server's tools, with no change to the client itself. The tool definitions are not scattered across multiple app codebases. They have one home.
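To make the "one home" idea concrete, here is a toy dispatcher for the two JSON-RPC methods an MCP client uses for tools: `tools/list` and `tools/call`. It is a deliberately reduced sketch, not a working MCP server: it skips the initialization handshake and any transport, and a real server would normally be built on an official MCP SDK. The `keyword_volume` tool and its placeholder output are hypothetical.

```python
def keyword_volume(arguments: dict) -> str:
    # Placeholder output; a real tool would query a data source here.
    return f"No data source wired up for '{arguments['keyword']}' in this sketch."


# Single registry of tools; every client sees exactly this list.
TOOLS = {
    "keyword_volume": {  # hypothetical tool
        "description": "Return search volume for a keyword.",
        "inputSchema": {
            "type": "object",
            "properties": {"keyword": {"type": "string"}},
            "required": ["keyword"],
        },
        "handler": keyword_volume,
    }
}


def _error(request_id, code: int, message: str) -> dict:
    return {"jsonrpc": "2.0", "id": request_id, "error": {"code": code, "message": message}}


def handle_request(request: dict) -> dict:
    """Answer a tools/list or tools/call JSON-RPC request."""
    request_id = request.get("id")
    method = request.get("method")
    if method == "tools/list":
        result = {
            "tools": [
                {"name": name, "description": t["description"], "inputSchema": t["inputSchema"]}
                for name, t in TOOLS.items()
            ]
        }
    elif method == "tools/call":
        params = request.get("params", {})
        tool = TOOLS.get(params.get("name"))
        if tool is None:
            return _error(request_id, -32602, f"Unknown tool: {params.get('name')}")
        text = tool["handler"](params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": text}], "isError": False}
    else:
        return _error(request_id, -32601, f"Method not found: {method}")
    return {"jsonrpc": "2.0", "id": request_id, "result": result}
```

Adding an entry to `TOOLS` changes what every client receives from `tools/list`, which is the property that function calling inside a single app cannot give you.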

The MCP project at modelcontextprotocol.io maintains a registry of servers covering Slack, GitHub, Google Drive, Postgres, and dozens of other common tools. If your use case is covered, start there before building anything custom. When it is not, a custom MCP server is the right answer, and the investment pays back across every AI client your team uses. The MCP specification is versioned, and client and server agree on a protocol version when they connect, so servers you build today should keep working when you upgrade your Claude client.

The decision tree

Five rules that cover most situations:

- If the caller is not an LLM (a service, a cron job, a webhook), use REST.

- If only one application needs the tool and only inside active AI sessions, use function calling.

- If two or more Claude clients (Desktop, Code, a custom app) need the same tool, use MCP.

- If team members other than you will use the same AI tools independently, use MCP.

- If you already have a REST API and want to give Claude access to it quickly, wrap it in a thin MCP server rather than rebuilding.

These rules are not mutually exclusive. A system can have all three layers operating at the same time, each doing the job it is actually suited for.
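One reading of the five rules, written as a small function. The field names and the order in which the rules are checked are assumptions made for this sketch; in particular, it applies the "wrap your REST API" rule only once MCP is already the recommendation.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    caller_is_llm: bool
    llm_clients: int = 1  # distinct LLM clients that need the tool
    shared_with_teammates: bool = False
    has_existing_rest_api: bool = False


def recommend(use_case: UseCase) -> str:
    """Map a use case to the abstraction the rules above point to."""
    # Rule 1: non-LLM callers (services, cron jobs, webhooks) use REST.
    if not use_case.caller_is_llm:
        return "REST"
    # Rules 3 and 4: several clients or several people sharing tools means MCP.
    if use_case.llm_clients >= 2 or use_case.shared_with_teammates:
        # Rule 5: reuse an existing REST API behind a thin MCP server.
        if use_case.has_existing_rest_api:
            return "MCP (thin wrapper over the existing REST API)"
        return "MCP"
    # Rule 2: one application, one session.
    return "function calling"
```

For example, `recommend(UseCase(caller_is_llm=True, llm_clients=3))` returns `"MCP"`, while a single-app chatbot with no sharing lands on `"function calling"`.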

When to combine them

The most robust AI architectures layer all three abstractions. REST handles service-to-service traffic and non-LLM clients. Function calling handles the ad-hoc tool use that is tightly scoped to one application session. MCP handles the shared tooling layer that all Claude clients draw from.

The SEO content engine case study is a good example of this layering in practice. The content operation runs REST endpoints for campaign management, scheduling, and analytics, called by workflow automation services on a schedule. When a Claude-powered workflow needs to look up keyword data or check a page's current ranking, it does that through an MCP server that the entire team also uses in Claude Desktop for ad-hoc research. And inside specific content generation sessions, function calling handles tool use that is scoped to that session only, like formatting a draft or validating a URL structure.

The practical lesson from that build is that you should not try to force one abstraction to do all three jobs. REST becomes awkward when you want LLM-driven tool selection. Function calling becomes a maintenance burden when you are duplicating tool definitions across five apps. MCP is overkill when you have one session and one app that needs one tool. Respect what each abstraction is designed for, and the architecture stays clean.

Cost and maintenance tradeoffs

Function calling has near-zero infrastructure cost. It lives in your application code. When you change a tool definition, you deploy your app. The downside is that tool definitions drift across applications unless you are disciplined about sharing them through a library. In practice, most teams are not that disciplined, and after six months you have three slightly different versions of the same tool scattered across three codebases.
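A sketch of what "sharing them through a library" could look like: one canonical definition per tool, with small converters for each consumer. The JSON Schema is the same in both targets; the main difference is the key name, `input_schema` in the Anthropic tool use format and `inputSchema` in an MCP tool listing. The `SHARED_TOOLS` structure and the `check_ranking` tool are hypothetical.

```python
# Canonical definitions, kept in one shared module.
SHARED_TOOLS = [
    {
        "name": "check_ranking",  # hypothetical tool
        "description": "Check the current search ranking of a page.",
        "schema": {
            "type": "object",
            "properties": {"url": {"type": "string"}},
            "required": ["url"],
        },
    }
]


def to_function_calling(tool: dict) -> dict:
    """Shape used when passing tools directly with an API request."""
    return {
        "name": tool["name"],
        "description": tool["description"],
        "input_schema": tool["schema"],
    }


def to_mcp(tool: dict) -> dict:
    """Shape used in an MCP server's tool listing."""
    return {
        "name": tool["name"],
        "description": tool["description"],
        "inputSchema": tool["schema"],
    }


FUNCTION_CALLING_TOOLS = [to_function_calling(t) for t in SHARED_TOOLS]
MCP_TOOLS = [to_mcp(t) for t in SHARED_TOOLS]
```

With this layout, a schema change happens once in `SHARED_TOOLS`, and both the single-app integration and any later MCP server derive their definitions from it.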

MCP has infrastructure cost. You are running a server, monitoring it, updating it, and managing its deployment. For a team of one or two, that overhead is real. A small VM at $10 to $20 per month covers the hosting, but you also need a deployment pipeline, a way to restart the server when it crashes, and documentation so your teammates understand what the tools do. The payback is a single source of truth for all AI tooling across every client and every team member.

REST has the highest baseline cost because you are building and maintaining a full API surface. But you already have that cost if you are running any kind of service-oriented architecture. The question is whether to expose that REST API directly to Claude, wrap it in an MCP server, or both. Wrapping is usually the right call when multiple Claude clients need access. The MCP layer adds a small translation overhead but saves you from writing client-specific integration code for every new Claude surface you add.
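The translation step inside such a wrapper is small. The sketch below maps a tool name and its arguments to an HTTP request against a hypothetical `https://api.example.com` backend, reusing the `/orders` endpoints from earlier. It only builds the request; sending it, handling errors, and adding auth are left out, and the tool names are illustrative.

```python
import json
from urllib.parse import quote
from urllib.request import Request

BASE_URL = "https://api.example.com"  # hypothetical existing REST API


def get_order_request(arguments: dict) -> Request:
    # Percent-encode the ID so it cannot alter the URL path.
    order_id = quote(str(arguments["order_id"]), safe="")
    return Request(f"{BASE_URL}/orders/{order_id}", method="GET")


def create_order_request(arguments: dict) -> Request:
    body = json.dumps(arguments).encode("utf-8")
    return Request(
        f"{BASE_URL}/orders",
        data=body,
        method="POST",
        headers={"Content-Type": "application/json"},
    )


# One entry per tool the wrapper exposes.
ROUTES = {
    "get_order": get_order_request,
    "create_order": create_order_request,
}


def tool_call_to_request(name: str, arguments: dict) -> Request:
    """Translate a tool call into the equivalent REST request."""
    try:
        build = ROUTES[name]
    except KeyError:
        raise ValueError(f"Unknown tool: {name}") from None
    return build(arguments)
```

Because the wrapper holds only this mapping, the REST API stays the source of truth for business logic, and non-LLM clients keep calling it directly.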

One pitfall: building MCP when function calling would do

The most common mistake in AI tooling is over-engineering early. MCP is newer and generates more interest right now, so developers reach for it by default. But if you are building a feature for one app, for one session, for one use case, function calling ships faster and is easier to debug.

Building an MCP server has real upfront cost: design the tool schemas, stand up the server, configure auth, write documentation, set up monitoring. For a single-app use case that might never need to be shared, that cost is pure waste. The rule is simple: start with function calling. When you find yourself copy-pasting tool definitions between projects, or wishing a teammate could use the same tools in their own Claude session, that is the signal to extract them into an MCP server. Build for the stage you are at, not the stage you might reach.

Frequently asked questions

- Is MCP going to replace REST APIs?

- No. REST stays right for machine-to-machine integrations and for non-LLM clients. MCP is an addition, not a replacement.

- Can my existing REST API be wrapped as an MCP server?

- Yes. A thin MCP server that translates tool calls into REST calls is often the fastest path to give Claude access to an API you already run.

- When is function calling enough vs custom MCP?

- Function calling is enough when only one app, in one AI session, needs the tool. MCP is worth it when multiple Claude clients or team members will share the same tools.

- Does MCP work with models other than Claude?

- MCP is an open protocol. Any client that implements the spec can use a server. Most adoption is in Anthropic products today with broader support emerging.

- What about LangChain tools?

- LangChain tools solve a similar problem but stay inside the LangChain app lifecycle. MCP is a cross-client standard instead of a framework-specific abstraction.
